Docker Basics

Containers, such as Docker containers, offer resource isolation and allocation benefits similar to those of virtual machines, but a different architectural approach makes them much more portable and efficient.

Virtual Machines

Each virtual machine includes the application, the necessary binaries and libraries, and an entire guest operating system, which together can amount to tens of gigabytes.

Containers

Containers include the application and all of its dependencies but share the host kernel with other containers. They run as isolated processes in user space on the host operating system. They’re also not tied to any specific infrastructure.

Containers running on a single machine all share the same operating system kernel, so they start almost instantly (there is no guest operating system to boot) and make more efficient use of RAM. Images are constructed from layered filesystems, so they can share common files, which makes disk usage and image downloads much more efficient.
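A toy Python model of that sharing follows. It is not Docker's actual storage driver: it simply assumes each layer is identified by a SHA-256 digest of its contents (loosely echoing Docker's content-addressable layer IDs) and that the local store keeps each distinct layer only once. LayerStore, add_image, and the sample layer bytes are hypothetical.

```python
import hashlib


class LayerStore:
    """Toy local image store: each distinct layer is kept once, keyed by digest."""

    def __init__(self) -> None:
        self.layers: dict[str, bytes] = {}      # digest -> layer contents
        self.images: dict[str, list[str]] = {}  # image name -> ordered layer digests

    def add_image(self, name: str, layers: list[bytes]) -> int:
        """Register an image and return how many new bytes had to be stored."""
        new_bytes = 0
        digests = []
        for content in layers:
            digest = "sha256:" + hashlib.sha256(content).hexdigest()
            if digest not in self.layers:  # only store (or download) layers we lack
                self.layers[digest] = content
                new_bytes += len(content)
            digests.append(digest)
        self.images[name] = digests
        return new_bytes

    def disk_usage(self) -> int:
        """Total bytes held by all distinct layers."""
        return sum(len(c) for c in self.layers.values())


if __name__ == "__main__":
    base = b"x" * 1000  # stands in for a shared base OS layer
    store = LayerStore()
    print(store.add_image("app-a", [base, b"app A code"]))  # 1010
    print(store.add_image("app-b", [base, b"app B code"]))  # 10: base layer reused
    print(store.disk_usage())                               # 1020, not 2020
```

The second image costs only its own top layer, which is the effect the paragraph above describes for both disk usage and downloads.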

Container (lightweight process virtualization) technology is not new; what is new is mainstream support in the vanilla kernel, which paved the way for widespread adoption (Linux kernel 3.8, released in February 2013; cf. Rami Rosen).

FreeBSD has Jails, Solaris has Zones and there are other (Linux) container technologies: OpenVZ, VServer, Google Containers, LXC/LXD, Docker, etc.

LXC

LXC owes its origin to the development of cgroups and namespaces in the Linux kernel to support lightweight virtualized OS environments (containers), and to early work at IBM by Daniel Lezcano and Serge Hallyn dating from 2009.

The LXC project provides tools to manage containers, advanced networking and storage support, and a wide choice of minimal container OS templates. It is currently led by a two-member team, Stephane Graber and Serge Hallyn from Ubuntu, and the project is supported by Ubuntu.

Docker

Docker is a project by dotCloud (now Docker Inc.), released in March 2013. It was initially based on LXC and aimed at building single-application containers. Docker has since developed its own implementation, libcontainer, which uses kernel namespaces and cgroups directly.

Docker allows you to package an application with all of its dependencies into a standardized unit for software development.

Docker containers wrap up a piece of software in a complete filesystem that contains everything it needs to run: code, runtime, system tools, system libraries, and anything else you can install on a server. This means the software should run the same way regardless of the environment it is running in, provided the host offers a compatible kernel.

Docker containers run on any computer, on any infrastructure, and in any cloud that can host a Docker Engine.

LXC vs. Docker

Both LXC and Docker are userland container managers that use kernel namespaces to provide end-user containers. We also now have systemd-nspawn, which does the same thing.
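On Linux, the namespaces a process belongs to are visible as symbolic links under /proc/<pid>/ns, each resolving to a string such as pid:[4026531836]; processes that share a namespace show the same bracketed number, while a process that a container manager has placed in fresh namespaces shows different ones. The Python sketch below only reads those entries for one process. The function name namespace_ids is hypothetical, and the code assumes a Linux /proc filesystem (it returns an empty dict elsewhere).

```python
import os


def namespace_ids(pid="self"):
    """Map namespace type -> namespace number for a process (Linux only).

    Each entry in /proc/<pid>/ns is a symlink such as 'pid:[4026531836]';
    processes in the same namespace see the same bracketed number.
    """
    ns_dir = f"/proc/{pid}/ns"
    if not os.path.isdir(ns_dir):
        return {}  # not Linux, or /proc is unavailable
    ids = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            target = os.readlink(os.path.join(ns_dir, name))
            ids[name] = int(target[target.index("[") + 1 : target.rindex("]")])
        except (OSError, ValueError):
            continue  # unreadable or unexpected entry in a restricted environment
    return ids


if __name__ == "__main__":
    for name, number in namespace_ids().items():
        print(f"{name:20} {number}")
```

Running this on the host and again inside a container would show different numbers for namespaces such as pid, net, and mnt.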

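The main difference is that LXC containers run an init system and can thus host multiple processes, much like a lightweight virtual machine, whereas Docker containers do not run an init by default and are designed around a single application process, which runs as PID 1 inside the container.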
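One concrete job of an init is reaping: when a process exits, its parent must collect its exit status, and orphaned processes are re-parented to PID 1, which must then collect them too; otherwise they linger as zombies. The Python sketch below shows only that non-blocking reaping step, not a full init system. The function name reap_zombies is hypothetical, and the code assumes a POSIX system and Python 3.9 or later.

```python
import os
import time


def reap_zombies():
    """Collect every child that has already exited, without blocking.

    Returns a list of (pid, exit_code) pairs. An init process would
    typically call this whenever it receives SIGCHLD.
    """
    reaped = []
    while True:
        try:
            pid, status = os.waitpid(-1, os.WNOHANG)  # -1 means "any child"
        except ChildProcessError:
            break  # no children at all
        if pid == 0:
            break  # children exist, but none have exited yet
        reaped.append((pid, os.waitstatus_to_exitcode(status)))
    return reaped


if __name__ == "__main__":
    child = os.fork()
    if child == 0:
        os._exit(3)  # child exits at once and stays a zombie until reaped
    time.sleep(0.1)
    print(reap_zombies())
```

When a single application runs as PID 1 in a container, it inherits this duty whether or not it was written with it in mind.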

Docker

Containers isolate individual applications and use operating system resources that have been abstracted by Docker. Containers are built by “layering”: multiple containers can share the same underlying layers, which decreases resource usage.

Typically, when designing an application or service to use Docker, it works best to break out functionality into individual containers, a design known as microservice architecture.

This gives you the ability to easily scale or update components independently in the future.

Having this flexibility is one of the many reasons that people are interested in Docker for development and deployment.

Advantages

- Lightweight resource utilization: instead of virtualizing an entire operating system, containers isolate at the process level and use the host’s kernel.

- Portability: all of the dependencies for a containerized application are bundled inside of the container, allowing it to run on any Docker host.

- Predictability: the host does not care about what is running inside of the container, and the container does not care about which host it is running on. The interfaces are standardized and the interactions are predictable.

Docker Engine

When people say “Docker”, they typically mean Docker Engine, the client-server application made up of the Docker daemon, a REST API that specifies interfaces for interacting with the daemon, and a command line interface (CLI) client that talks to the daemon through that REST API.

Docker Engine accepts docker commands from the CLI, such as docker run <image>, docker ps to list running containers, docker images to list images, and so on.
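Because the CLI is just a client of the daemon's REST API, any HTTP client can ask for the same information. The Python sketch below sends plain HTTP requests over the daemon's UNIX socket using only the standard library. It assumes the default socket path /var/run/docker.sock and permission to open it; GET /containers/json and GET /images/json are the Engine API endpoints that correspond to docker ps and docker images. The helper names UnixHTTPConnection and docker_get are hypothetical.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that talks to a UNIX domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0) -> None:
        super().__init__("localhost", timeout=timeout)  # host only fills the Host header
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_get(path: str, socket_path: str = "/var/run/docker.sock"):
    """Send GET <path> to the daemon and decode the JSON response body."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        resp = conn.getresponse()
        body = resp.read()
        if resp.status != 200:
            raise RuntimeError(f"GET {path} failed: {resp.status} {body!r}")
        return json.loads(body)
    finally:
        conn.close()


if __name__ == "__main__":
    # Roughly what "docker ps" and "docker images" ask the daemon for.
    for c in docker_get("/containers/json"):
        print(c["Id"][:12], c["Image"], c["Status"])
    for img in docker_get("/images/json"):
        print(img["Id"][:19], img.get("RepoTags"))
```

On a typical Linux install, opening the socket requires root or membership in the docker group, the same requirement the docker CLI itself has.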

Docker Engine is the core of Docker; nothing else runs without it.

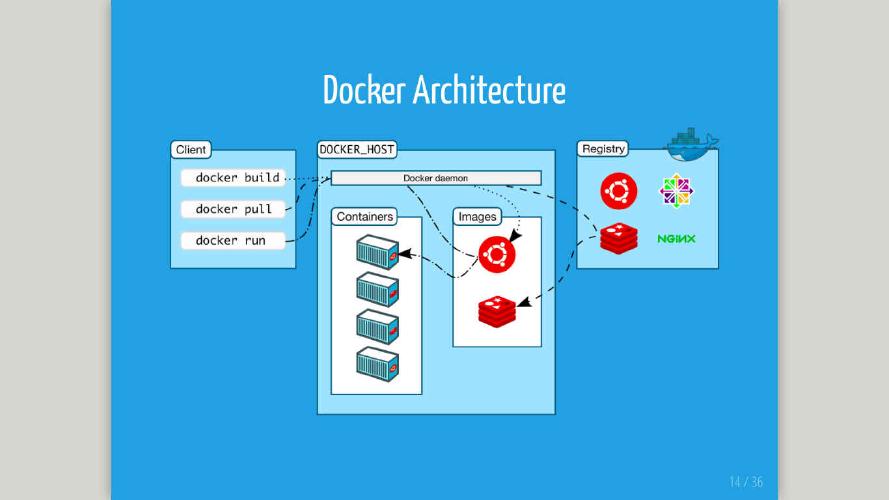

Docker Architecture

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker containers.

Both the Docker client and the daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon.

The Docker client and daemon communicate through a REST API, over a UNIX socket or a network interface.
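Which daemon the docker CLI talks to is commonly set with the DOCKER_HOST environment variable: when it is unset, the client uses the local UNIX socket unix:///var/run/docker.sock, and setting it to a value such as tcp://build-server:2376 points the same client at a remote daemon. The Python sketch below imitates that choice for the unix and tcp schemes only. The function name resolve_docker_host and the host name build-server are hypothetical, and the real CLI also handles other schemes (such as ssh://), contexts, and TLS settings that this ignores.

```python
import os
from urllib.parse import urlparse

DEFAULT_DOCKER_HOST = "unix:///var/run/docker.sock"


def resolve_docker_host(env=None):
    """Return ('unix', socket_path) or ('tcp', (host, port)) for the daemon."""
    env = os.environ if env is None else env
    url = urlparse(env.get("DOCKER_HOST") or DEFAULT_DOCKER_HOST)
    if url.scheme == "unix":
        return ("unix", url.path)
    if url.scheme == "tcp":
        # Assumption: fall back to 2375, the conventional unencrypted port
        # (TLS-protected daemons usually listen on 2376 instead).
        return ("tcp", (url.hostname, url.port or 2375))
    raise ValueError(f"unsupported DOCKER_HOST scheme: {url.scheme!r}")
```

For example, resolve_docker_host({"DOCKER_HOST": "tcp://build-server:2376"}) returns ("tcp", ("build-server", 2376)), and resolve_docker_host({}) returns ("unix", "/var/run/docker.sock").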

Docker daemon

The Docker daemon runs on a host machine. Users do not interact with the daemon directly; instead, they go through the Docker client.

Docker client

The Docker client, in the form of the docker binary, is the primary user interface to Docker.

It accepts commands from the user and communicates back and forth with a Docker daemon.

Docker Compose

Compose is a tool for defining and running multi-container Docker applications. With Compose, you use a Compose file to configure your application’s services. Then, using a single command, you create and start all the services from your configuration.

Using Compose is basically a three-step process:

- Define your app’s environment with a Dockerfile so it can be reproduced anywhere.

- Define the services that make up your app in docker-compose.yml so they can be run together in an isolated environment.

- Lastly, run docker-compose up and Compose will start and run your entire app.
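When services declare depends_on in docker-compose.yml, Compose starts them in dependency order. The Python sketch below models only that ordering step with graphlib from the standard library (Python 3.9+). The plain-dict stand-in for the parsed services section and the service names are hypothetical, and real Compose does much more (networks, volumes, health checks).

```python
from graphlib import CycleError, TopologicalSorter


def start_order(services):
    """Return service names so that each one comes after its depends_on entries.

    `services` mimics the 'services:' mapping of a parsed docker-compose.yml.
    depends_on may be a list or a mapping; set() keeps the service names either way.
    """
    graph = {name: set(spec.get("depends_on", [])) for name, spec in services.items()}
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError as exc:
        raise ValueError(f"circular depends_on: {exc.args[1]}") from exc


if __name__ == "__main__":
    compose_services = {
        "web": {"build": ".", "depends_on": ["api"]},
        "api": {"build": "./api", "depends_on": ["db", "cache"]},
        "db": {"image": "postgres"},
        "cache": {"image": "redis"},
    }
    print(start_order(compose_services))  # db and cache first, then api, then web
```

A topological sort is a natural fit here because it also rejects circular dependencies, which no start order could satisfy.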